An OSCE is a type of examination, first described in 1975, that is often used in health sciences (e.g. medicine, physical therapy, nursing, pharmacy, dentistry) to test clinical skills performance and competence (knowledge, skills and attitudes). Areas assessed include communication, clinical examination, medical procedures/prescription, clinical decision making, clinical thinking/reasoning, exercise prescription, joint mobilization/manipulation techniques and interpretation of clinical outcome results.

The OSCE was developed in 1975 by Ronald Harden, Professor of Medical Education (Emeritus) at the University of Dundee. Professor Harden first outlined it in an article in The British Medical Journal (BMJ) titled “Assessment of clinical competence using objective structured examination”.

The OSCE is now a widely accepted standard exam in higher education assessment.

What does OSCE stand for?

OSCE stands for Objective Structured Clinical Examination.

Objective refers to scoring by multiple examiners at different stations, which results in a more objective score at the end of the OSCE.

OSCE scenarios and assessment forms should be standardised across multiple stations. Each station within an OSCE should focus on an area of clinical competence. The scoring and feedback at each individual station are subjective, but they bring to the fore what a student does well and where a student has shortcomings. The overall score from multiple examiners is therefore ‘more objective’, and the feedback provided is the most powerful tool for progressing students’ learning.

Structured refers to the standardised, consecutive format and procedures of the time-constrained, station-based exam.

Students should experience the same scenario within a station and each student must perform the same task within a fixed time duration. Each student should encounter the same level of difficulty and the same assessment form is used for each student.

Clinical refers to the fact that clinical skills are being assessed during the OSCE and the encounter is very much clinically based.

An OSCE station represents clinical situations that reflect what happens within healthcare work settings. Each station should look to challenge students to apply their clinical knowledge and skills as they work through each scenario encountered.

Examination is self-explanatory.

An OSCE offers a reliable way to assess a student’s competence across a range of high stakes scenarios.

How is an OSCE implemented?

When setting up an OSCE the following aspects must be considered:

- The number of stations that make up the OSCE circuit. A circuit of 15 to 18 stations is considered ‘objective’. Students rotate through this series of timed stations in sequential order.

- At each station, students work on a different clinical scenario. An example scenario may be: “A 44-year-old man presents with a complaint of headaches. Obtain a complete history of this complaint”.

- Each station often assesses multiple aspects of a student’s clinical competence across communication, clinical examination, medical procedures/prescription, exercise prescription, joint mobilization/manipulation techniques and interpretation of results.
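The rotation described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any particular institution or platform schedules its exams: the function name `rotation_schedule`, the station names and the student labels are all hypothetical, and the sketch assumes one student per station with everyone moving on at the same time.

```python
def rotation_schedule(students, stations):
    """Return one dict per round, mapping each station to the student in it.

    Assumption: student k starts at station k and moves one station
    forward after every round, wrapping around at the end of the circuit.
    """
    n = len(stations)
    if len(students) > n:
        raise ValueError("a circuit holds at most one student per station")
    rounds = []
    for r in range(n):
        current = {}
        for i, station in enumerate(stations):
            # The student who started at index (i - r) mod n is now at station i.
            start = (i - r) % n
            current[station] = students[start] if start < len(students) else None
        rounds.append(current)
    return rounds


# Hypothetical three-station circuit with three students.
stations = ["History taking", "Clinical examination", "Procedure"]
students = ["Student A", "Student B", "Student C"]
for number, current in enumerate(rotation_schedule(students, stations), start=1):
    print(f"Round {number}: {current}")
```

After as many rounds as there are stations, every student has visited every station exactly once and in the same sequential order, which is what keeps the circuit structured.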

Many universities around the world have published articles on successful OSCE implementations, such as Kaohsiung Medical University’s 2007 article in The Kaohsiung Journal of Medical Sciences on running such an exam.

What is an OSCE station?

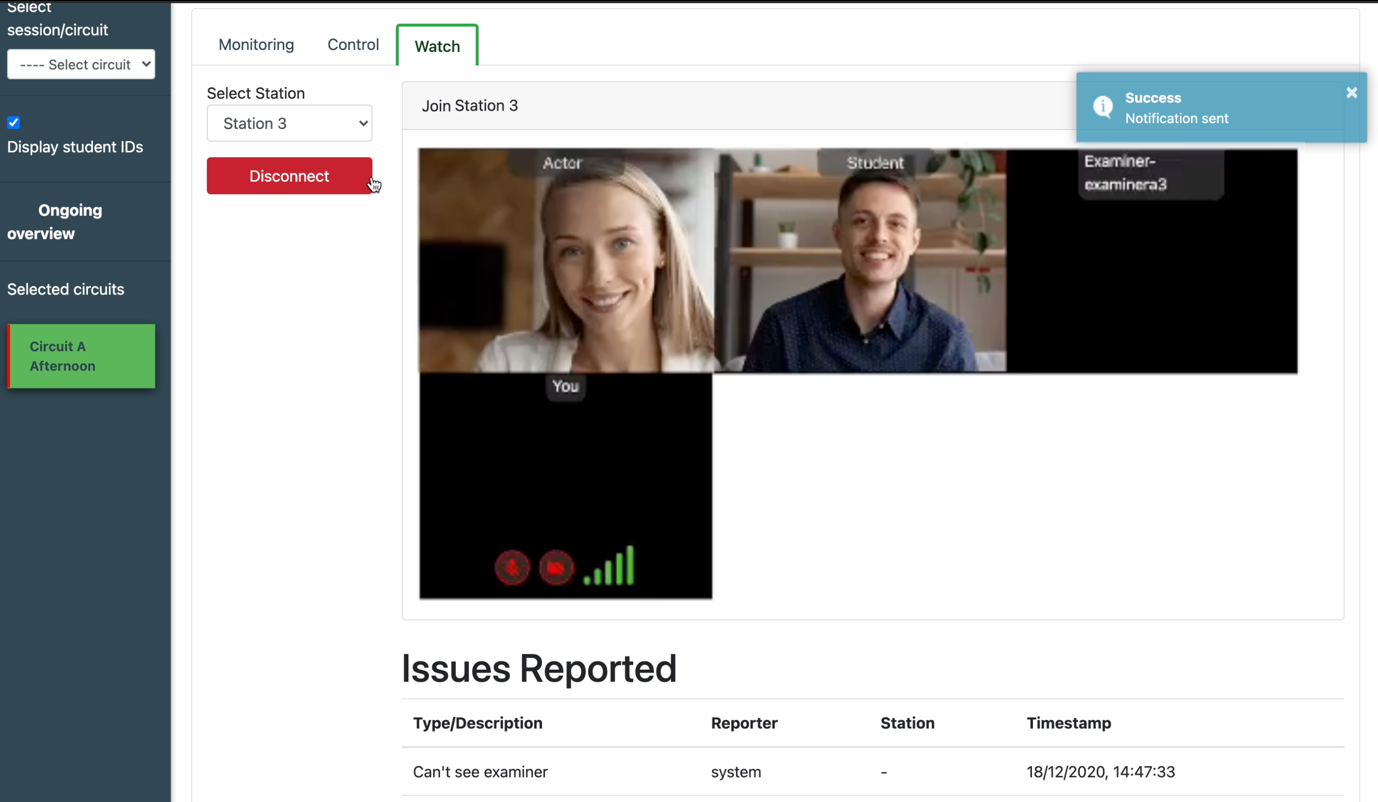

An OSCE consists of a number of different scenarios, each of which takes place in a different station. The OSCE progresses as candidates move from station to station, engaging in different scenarios with mock patients (actors). They are assessed by an examiner at each station and receive a score based on their performance. Examiners record the score on an assessment platform such as Qpercom Observe.

An OSCE station in a remote OSCE being conducted using Qpercom Observe

Candidates or students are given information about the upcoming scenario before they enter a station and are allowed 2 minutes to prepare for the assessment. The information given to the candidate will outline the age and gender of the patient they are about to meet in the station and the medical complaint that they have.

The length of a station varies by institution: a station can last anywhere between 5 and 15 minutes, and sometimes longer.
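As a rough guide to what these timings add up to, here is a small arithmetic sketch. It assumes the 2 minutes of preparation happen before every station and that there are no rest stations or breaks, which may not match a given institution's timetable. The function name `circuit_minutes` is hypothetical.

```python
def circuit_minutes(num_stations, station_minutes, prep_minutes=2):
    """Total minutes one candidate needs to complete the whole circuit.

    Assumption: every station is preceded by the same preparation time
    and there are no rest stations or changeover gaps.
    """
    if num_stations < 1 or station_minutes <= 0 or prep_minutes < 0:
        raise ValueError("timings must be positive")
    return num_stations * (prep_minutes + station_minutes)


# Illustrative extremes only: a short circuit with short stations,
# and a long circuit with long stations.
shortest = circuit_minutes(15, 5)    # 15 * (2 + 5)  = 105 minutes
longest = circuit_minutes(18, 15)    # 18 * (2 + 15) = 306 minutes
print(shortest, longest)
```

Pairing the station counts with the station lengths in this way is purely illustrative; the article does not say that institutions combine them like this.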

How is an OSCE graded?

OSCEs are graded using a structured marking scheme in which candidates are scored against specific or more generic criteria in each station. The examiners record your score as the scenario plays out, either on paper, if the institution still uses paper-based assessment, or digitally in an OSCE assessment tool like Qpercom Observe.
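To make the idea of a structured marking scheme concrete, here is a minimal sketch of how a station score could be worked out from a list of criteria. The criteria, their maximum marks and the `station_score` function are invented for illustration. Real assessment forms, including those in Qpercom Observe, may be structured differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One line on a hypothetical station marking sheet."""
    description: str
    max_marks: int


def station_score(criteria, awarded):
    """Return the station score as a percentage of the marks available."""
    if len(criteria) != len(awarded):
        raise ValueError("one awarded mark is needed per criterion")
    for criterion, marks in zip(criteria, awarded):
        if not 0 <= marks <= criterion.max_marks:
            raise ValueError(f"marks out of range for: {criterion.description}")
    available = sum(c.max_marks for c in criteria)
    return 100.0 * sum(awarded) / available


# Hypothetical history-taking station.
criteria = [
    Criterion("Introduces self and confirms patient identity", 2),
    Criterion("Explores the presenting complaint", 5),
    Criterion("Summarises and checks understanding", 3),
]
print(station_score(criteria, [2, 4, 2]))  # 8 of 10 marks -> 80.0
```

Because every candidate is marked against the same criteria with the same maximum marks, scores from the same station can be compared directly.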

What is the pass mark for an OSCE?

In general, the pass mark for an OSCE is the average ‘observed’ score of each individual station. The approach to calculating the OSCE score may differ from university to university. In recent years, Schools of Medicine have tended to use Borderline Regression Analysis (BRA), which incorporates the difficulty of each station and the professional opinion of the examiners into the score more fully than earlier approaches. BRA adjusts the pass mark by matching each candidate’s observed (raw) score with the Global Rating Score (Fail, Borderline, Pass, Good or Excellent) given by the examiner. As a result, the pass mark may move up or down.
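For readers who like to see the mechanics, the sketch below shows one common way a borderline regression pass mark is calculated for a single station. A straight line is fitted through the candidates' observed scores against their global ratings, and the line is then read off at the ‘Borderline’ rating. The numeric coding of the ratings (0 to 4), the function name and the sample data are assumptions made for illustration. Universities and assessment platforms may code the ratings or fit the line differently.

```python
# Assumed numeric coding of the Global Rating Score, for illustration only.
GLOBAL_RATINGS = {"Fail": 0, "Borderline": 1, "Pass": 2, "Good": 3, "Excellent": 4}


def borderline_regression_pass_mark(observed_scores, global_ratings):
    """Station pass mark: predicted observed score at the 'Borderline' rating.

    Fits observed score = intercept + slope * rating by ordinary least squares.
    """
    xs = [GLOBAL_RATINGS[rating] for rating in global_ratings]
    ys = list(observed_scores)
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two matched scores and ratings")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all candidates have the same global rating")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept + slope * GLOBAL_RATINGS["Borderline"]


# Made-up results for five candidates at one station.
scores = [40, 50, 60, 70, 80]
ratings = ["Fail", "Borderline", "Pass", "Good", "Excellent"]
print(borderline_regression_pass_mark(scores, ratings))  # 50.0
```

With a harder station, candidates rated ‘Borderline’ tend to have lower observed scores, so the fitted line and the pass mark move down. With an easier station they move up.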

Watch an OSCE video

Watch a video of two students and an actor-patient playing out an OSCE scenario as part of a station known as ‘Breaking Bad News’.

In this video, the students must break the news to a male patient that he has rheumatoid arthritis. This is an example of a good performance in an OSCE station.

The examiners use Qpercom Observe to grade the student on their performance.

Everything you need to know about OSCEs

Still itching to find out as much information as you can about OSCEs? Don’t fret. Many students, especially those starting medical school for the first time, are in the same position.

In the video below, Bilal Ali, a student at the University of Liverpool, tests some of his fellow students on their knowledge of OSCEs. Their responses are diverse: some know exactly what the purpose of OSCEs is, while others are still left wondering what OSCE really stands for.

There are also some really handy tips for preparing for your OSCE, along with advice from students who have already been through their exams.

Preparing for an upcoming OSCE?

If you’re preparing for an upcoming OSCE and need some tips and advice, check out our five tips for preparing for an OSCE.

One of the most effective ways to prepare for an OSCE is to take a Mock OSCE to test and improve your skills before the real thing.

Mock-OSCE.com offer fully live and remote Mock OSCEs with real patient actors, results and feedback to help you refine your performance.